SmartComposition: A Component-Based Approach for Creating Multi-Screen Mashups

Authors: Michael Krug, Fabian Wiedemann, Martin Gaedke

Introduction

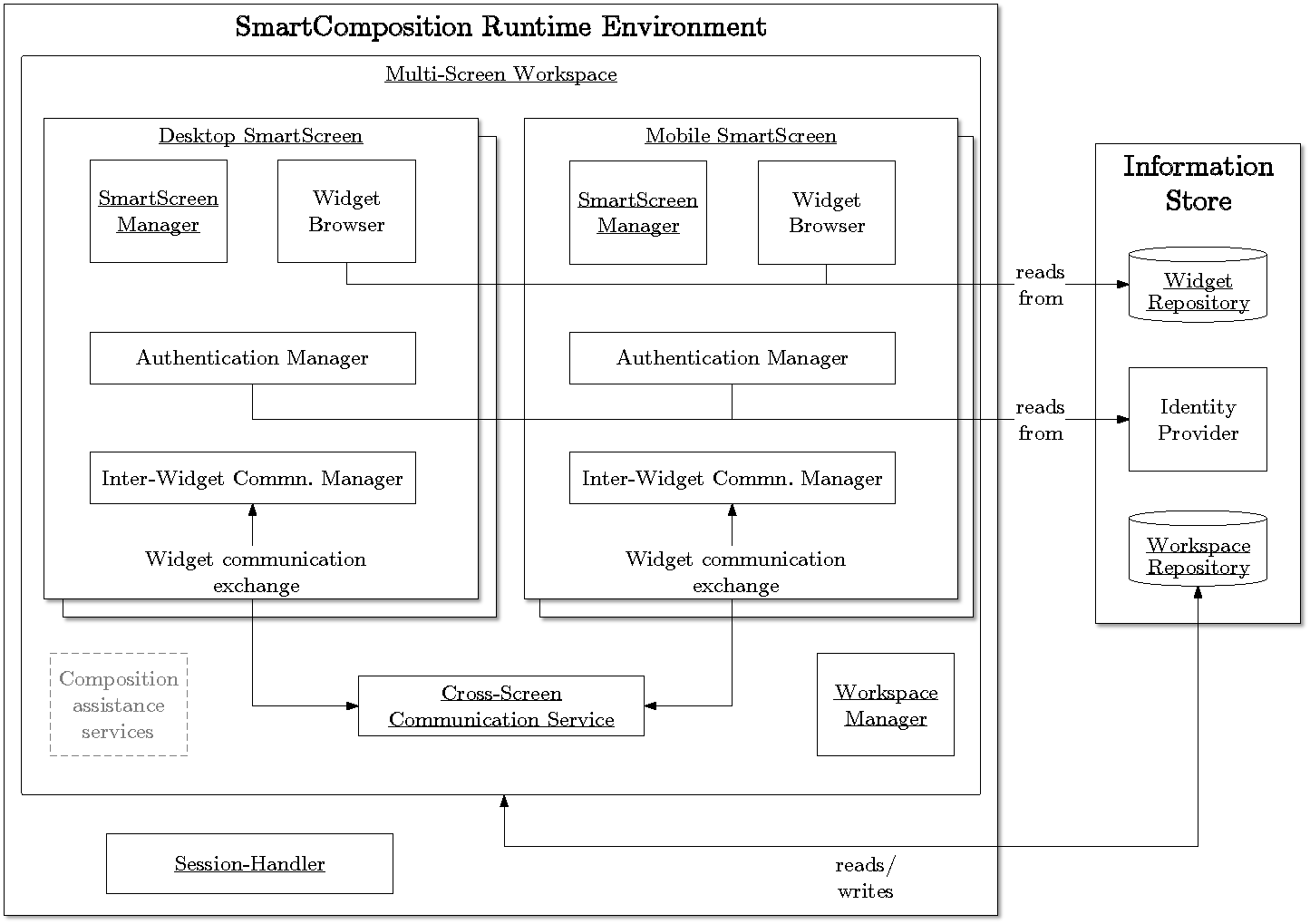

The spread and use of mobile devices such as smartphones and tablets continue to increase. While most applications developed for these devices can only be used on the device itself, mobile devices also make a new kind of application possible: multi-screen applications. These applications run distributed across multiple screens, such as a PC, tablet, smartphone, or TV. The composition of these screens creates a new user experience for single as well as multiple users. Although mashups are a common way of designing end-user interfaces, they fail to support multiple screens. This paper presents SmartComposition, a component-based approach for developing multi-screen mashups. We draw up several scenarios that illustrate the opportunities of multi-screen mashups and derive from them the requirements SmartComposition needs to meet. Afterwards, the architecture of our approach is presented and described in detail. A major challenge is the synchronization between the screens, which SmartComposition addresses through real-time communication via WebSockets or peer-to-peer communication. We present a first prototype and evaluate our approach by developing two different multi-screen mashups. Finally, we discuss next research steps and define challenges for further research.

Demo

In the demo, we present our first prototype for media enrichment on distributed displays. We use a video clip of the German newscast “tagesschau” that is annotated with metadata. The video is shown on a large display, such as a PC monitor, while related additional content appears on the same display and on a smaller one, such as a tablet. This demonstrates the real-time synchronization between both displays. Furthermore, the demo illustrates selective information presentation: additional content is shown only on the display where the corresponding SmartTile is present.

The demonstration of our approach consists of two parts. The first page contains a video SmartTile, which plays a sample clip and publishes various events, along with other SmartTiles that display relevant information. The second page can be opened on another device, such as a smartphone, and displays only the related content that matches the SmartTiles you have added.
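The interplay described above, where a video SmartTile publishes events and each display shows only the content for which it hosts a matching SmartTile, can be illustrated with a minimal in-memory sketch. All names here (`EventBus`, `SyncChannel`, `SmartTileEvent`, the topics, and the payloads) are hypothetical and not taken from the prototype, and the `SyncChannel` class merely stands in for the WebSocket or peer-to-peer link between displays.

```typescript
// Hypothetical sketch: none of these names come from the SmartComposition prototype.
type SmartTileEvent = { topic: string; payload: string };
type Handler = (event: SmartTileEvent) => void;

// Local publish/subscribe hub for the SmartTiles on one display.
class EventBus {
  private handlers = new Map<string, Handler[]>();

  // Register a tile's handler for one topic.
  subscribe(topic: string, handler: Handler): void {
    const list = this.handlers.get(topic) ?? [];
    list.push(handler);
    this.handlers.set(topic, list);
  }

  // Deliver an event to the tiles on this display that subscribed to its topic.
  deliver(event: SmartTileEvent): void {
    for (const handler of this.handlers.get(event.topic) ?? []) {
      handler(event);
    }
  }
}

// Stand-in for the synchronization link between displays (assumption: a real
// system would send events over the network instead of calling deliver()).
class SyncChannel {
  private displays: EventBus[] = [];

  connect(bus: EventBus): void {
    this.displays.push(bus);
  }

  // Forward every published event to all connected displays.
  publish(event: SmartTileEvent): void {
    for (const bus of this.displays) {
      bus.deliver(event);
    }
  }
}

const channel = new SyncChannel();
const pcDisplay = new EventBus();
const tabletDisplay = new EventBus();
channel.connect(pcDisplay);
channel.connect(tabletDisplay);

const shownOnPc: string[] = [];
const shownOnTablet: string[] = [];

// The PC hosts a map tile; the tablet hosts an article tile.
pcDisplay.subscribe("location", (e) => { shownOnPc.push(`map: ${e.payload}`); });
tabletDisplay.subscribe("person", (e) => { shownOnTablet.push(`article: ${e.payload}`); });

// The video tile publishes events as annotated metadata becomes active.
channel.publish({ topic: "location", payload: "Berlin" });
channel.publish({ topic: "person", payload: "Example Person" });
```

In this sketch every display receives every event, and filtering happens locally through subscriptions, so a display without a matching tile simply ignores the event. Whether the prototype filters on the receiving side or elsewhere is not stated here.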

Another scenario addresses the enhancement of a presentation by distributing additional information to multiple secondary screens. As an example, a professor is giving a talk in front of many students. While the professor shows slides via a projector, the students receive further information on their mobile devices. Thus, the students can read and see details about the current topic without the slides being cluttered with text. Furthermore, the students can use the additional information, such as Wikipedia articles or further notes, on their laptops as a script. The professor can keep the slides clean, while the students get a synced script related to the current lecture.

Browser Support

The prototype is supported by the following browsers:

- Google Chrome 18+

- Internet Explorer 10

- Opera 12.1+

- Safari 6+

- Google Chrome on Android

The following browsers do not support the TextTrack API of the HTML5 video element; therefore, no events are published while the video plays. Nevertheless, manual publishing and the automatic synchronization of events from other displays still work.

- Firefox 11+

- Stock Browser on Android 4.1 (Enable WebSockets support in settings.)

- Google Chrome on iOS (Video cannot be played.)

- Safari on iOS (Video cannot be played.)
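The distinction between the two browser groups comes down to whether the video element exposes text tracks whose cue changes can be turned into published events. The following sketch shows one way such a check with a fallback could look. It is an assumption about the general technique, not the prototype's code: `publishCues`, `VideoLike`, `TrackLike`, and `CueLike` are hypothetical names, and the small interfaces only mirror the property names of the browser API (`textTracks`, `mode`, `activeCues`, `oncuechange`, `text`) so that the sketch runs without DOM typings.

```typescript
// Hypothetical structural types that mirror property names of the HTML5 API.
interface CueLike { text: string }
interface TrackLike {
  mode: string;
  activeCues: ArrayLike<CueLike> | null;
  oncuechange: (() => void) | null;
}
interface VideoLike { textTracks?: ArrayLike<TrackLike> }

// Wire the first text track so that each active cue is handed to `publish`.
// Returns false when no text track is available, in which case the caller
// has to fall back to manual publishing.
function publishCues(video: VideoLike, publish: (text: string) => void): boolean {
  const tracks = video.textTracks;
  if (!tracks || tracks.length === 0) {
    return false;
  }
  const track = tracks[0];
  // "hidden" keeps cues active and events firing without rendering captions.
  track.mode = "hidden";
  track.oncuechange = () => {
    const cues = track.activeCues;
    if (!cues) {
      return;
    }
    for (let i = 0; i < cues.length; i++) {
      publish(cues[i].text);
    }
  };
  return true;
}
```

A caller would check the return value and, when it is `false`, offer manual publishing only, which matches the behavior described for the browsers listed above.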